Learn Mongo(DB)

A resource to learn MongoDB provided by Justin Jenkins

Follow

Follow @LearnMongo on Twitter and Justin Jenkins on LinkedIn for tips, tricks and all that jazz

Courses

Join over 50,000 learners and watch the Learning MongoDB course on LinkedIn Learning

MongoQuest

Challenge your knowledge of MongoDB in MongoQuest, a simple game with example interview-like questions

Latest Posts

- World Cup: Finding the Goal with $match
  With the World Cup starting this week, there are going to be a lot of matches to watch for the next month or so, and there will hopefully be a…

- A (MongoDB) New Year’s Resolution
  Another New Year. Another reset. Another wave of optimism! Or, if you are a fan of Manchester United (like me), maybe not so optimistic. You’ve seen this pattern before. A…

- Working with Geo Location Data in MongoDB
  MongoDB makes it really easy to work with location data (sometimes called Geo Data) by simplifying how to store this type of data and streamlining how you query for it…

- Full-Text Search in MongoDB with Relevance Scores
  Do you know about MongoDB Text Search? Imagine we are building an app and want users to search by ingredients or dish names (like chocolate or zucchini bread) and get…

- Clean Up Old Data Automatically with MongoDB TTL Indexes
  With Big Data comes, well, a lot of data. But what if some of that data is only really useful within a certain timeframe? Think about things like: … Luckily,…

- Scaling MongoDB with Sharding: Setup Best Practices
  In our previous post, we covered the basics of sharding (how MongoDB distributes data across multiple servers), why config servers are critical, and how balancing helps maintain efficiency. Now, we’ll…

- Scaling MongoDB with Sharding: The Basics
  When your database starts to outgrow its capacity, you’ve got two options: scale up or scale out. Scaling up means buying bigger, beefier servers with more RAM, CPU, and disk…

- MongoDB Aggregations: Identifying Popular Ingredients with $unwind and $group
  In this series, we’re exploring different MongoDB aggregation operators by applying them to a collection of recipes. As part of our exploration we are imagining you’re building…

- MongoDB Aggregations: Organizing Recipes by Meal Type with $group
  In this series, we’re exploring different MongoDB aggregation operators by applying them to a recipe collection. I hope you’ll follow along with each post! As part of…

- MongoDB Aggregations: Finding Cooking Times with $min and $max
  In this series we will explore different operators in the MongoDB Aggregation Framework via the context of a collection of recipes. Let’s say we’re making a brand…

- How to Change a MongoDB Primary to a Secondary
  Maintaining a MongoDB Replica Set requires occasional maintenance and upgrades. Sometimes, this necessitates taking the primary node offline or converting it to a secondary node. Learn more about different Replica…

- Understanding the local Database in MongoDB
  When you set up and initiate a replica set in MongoDB, all databases and collections on the primary database are replicated to the secondary nodes. However, there is one crucial…

- MongoDB.local NYC 2024
  Last week I had the opportunity to attend MongoDB.local NYC and help out at the MongoDB Community Booth. We had a fun “scavenger hunt” around the MongoDB Community website to…

- Getting Started with MongoDB on Docker: A Step-by-Step Guide
  Are you eager to dive into MongoDB but unsure where to start? Docker provides a convenient way to set up MongoDB for local development, allowing you to experiment without the…

- MongoDB Arrays: Using Operators
  MongoDB offers a versatile and powerful way to work with array data using field query operators. Arrays are commonly used to store various types of data, and MongoDB’s array query…

- MongoDB’s “Hidden” Index Feature
  Indexes are crucial for optimizing query performance, but they can also be very costly to create (meaning they can take a lot of CPU, especially if built from scratch on…

- Streamlining Document Updates with Pipelines
  While you might be familiar with MongoDB’s Aggregation Framework pipelines (primarily used for crafting intricate query results), a lesser-known capability is their application in updating documents. This article will delve…

- Document Schema Validation
  MongoDB’s flexible document model allows for a wide range of data types within a collection, offering a vast amount of versatility. However, in certain scenarios, it becomes imperative to maintain…

- Query Plans: The Basics
  Optimizing the performance of MongoDB relies heavily on effective querying. However, determining areas for optimization in a query can be challenging. This is where the concept of a query plan…

- Exploring MongoDB Configuration
  Configuring MongoDB is a crucial step in optimizing its performance and tailoring it to the specific requirements of your application. MongoDB provides a configuration file that allows you to fine-tune…

- Replica Sets: Member Roles and Types
  MongoDB replica sets are a powerful way to ensure high availability and data redundancy in your database environment. While we won’t delve into all the intricate configuration options here, let’s…

- Replica Sets: Why an Odd Number of Nodes?
  If you are using a Replica Set with your MongoDB setup, you might be wondering why using an odd number of nodes (or members) is the best practice. After all…

- MongoDB Arrays: Removing Elements
  In the world of MongoDB, arrays bring order to chaos and enable the efficient organization of data. Yet, there come times when the need arises to prune or refine the…

- MongoDB Arrays: Sorting
  Arrays are a fundamental data structure in MongoDB, allowing you to store and manage collections of items. However, there are times when the specific order of items within an array…

- MongoDB Arrays: The Basics
  In MongoDB, arrays play a pivotal role in managing and organizing data. The capability to modify array items is crucial to adapt and evolve your datasets. MongoDB offers an array…

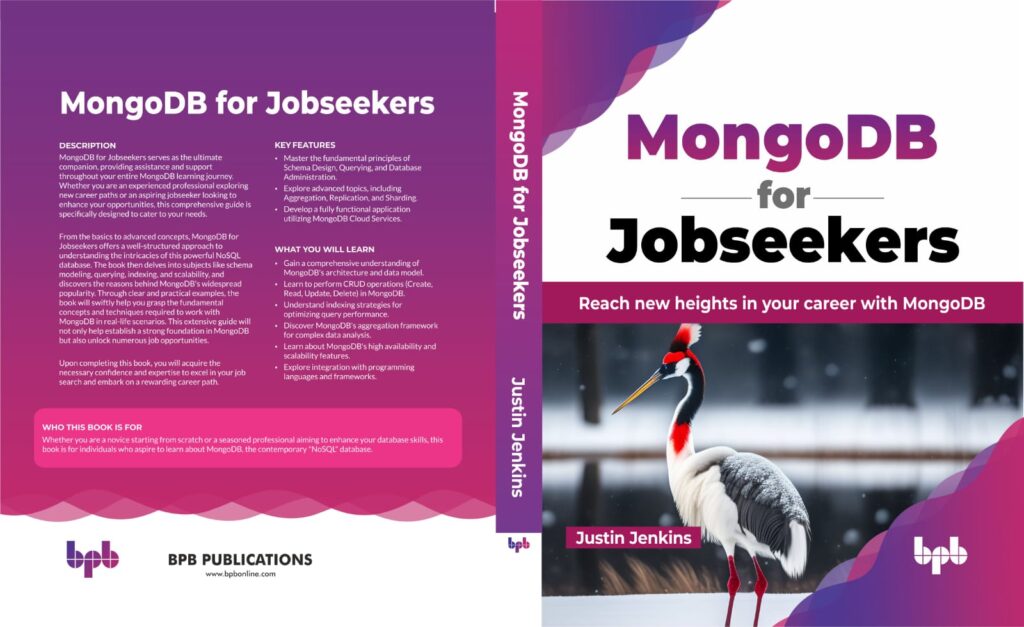

MongoDB for Jobseekers

A new book covering all aspects of MongoDB

See the Chapter Summaries, or a sample of the book here.

MongoDB for Jobseekers will be your comprehensive guide to mastering MongoDB, a powerful document database. This book is designed to equip job seekers and professionals with the necessary skills to excel in MongoDB-related roles and even help you prepare for job interviews.

Discover the reasons behind MongoDB’s popularity and learn how its unique document model sets it apart from traditional databases. Gain valuable insights into different job roles within the MongoDB ecosystem and identify the chapters that align with your specific career aspirations.

Start your MongoDB journey with step-by-step instructions for installing MongoDB Server on various operating systems, running it in Docker, and using MongoDB’s cloud service, Atlas. Familiarize yourself with essential tools like the MongoDB Shell and MongoDB Compass, MongoDB’s official GUI, and unleash the true potential of MongoDB’s document storage capabilities.

Dive into the world of document-oriented data storage and explore real-life examples that demonstrate how to structure and organize data effectively. Learn the ins and outs of creating, updating, and deleting documents, both individually and in bulk, and discover powerful querying techniques using MongoDB’s query operators.

Unleash the full potential of MongoDB’s document model by harnessing the power of complex data structures such as arrays and embedded documents. Master the art of querying and modifying these complex data types using MongoDB’s extensive array of operators.

Take your MongoDB skills to the next level with the Aggregation Framework, a powerful tool for manipulating and retrieving data. Learn how to construct efficient pipelines to obtain the precise information you need from your database.

Optimize the performance of your MongoDB deployment by understanding the importance of collections and indexes. Gain insights into different index types, collection configurations, and effective index creation and deletion strategies.

Effortlessly migrate data between databases and collections using MongoDB Compass, MongoDB Database Tools, and scripting methods. Learn the best practices for data import and export and explore techniques for transferring data between collections and databases.

Ensure the smooth operation of your MongoDB environment by configuring your server and effectively monitoring its performance. Troubleshoot common issues and leverage essential monitoring tools to ensure the stability and reliability of your MongoDB deployments.

Scale your MongoDB infrastructure seamlessly through replication and sharding techniques. Discover how to set up replica sets and leverage sharding to achieve horizontal scaling, empowering your applications to handle increased data loads.

Safeguard your MongoDB database with robust security measures such as authentication, authorization, and role-based access controls. Learn essential backup and restoration techniques and explore the world of database encryption to protect your valuable data.

Explore programming with MongoDB using popular languages like Python, Node.js (JavaScript), and PHP. Connect to MongoDB, perform basic queries, and leverage code samples provided in the book’s free GitHub Codespace.

Maximize your productivity with MongoDB’s essential tools, including the MongoDB Shell (mongosh), the MongoDB Visual Studio Code extension, and MongoDB Playgrounds. Personalize your MongoDB experience and unlock advanced capabilities with MongoDB Compass, the official GUI for MongoDB.

Discover the power of MongoDB Atlas, the cloud service offered by MongoDB. Gain an overview of cloud hosting services, database tools, and key features like search and charts that can enhance your MongoDB experience.

Build a React app using MongoDB Atlas App Services, leveraging shared MongoDB clusters, Atlas Functions, and the Realm SDK for Web. Experience the ease of development without the need to maintain your own replica set or server.

Prepare for job interviews with fifty interview-like questions and their detailed responses. Covering various difficulty levels, these questions will equip you with the knowledge needed to impress potential employers.

Explore additional topics such as change streams, transactions in MongoDB, storing large files with GridFS, and a comparison between SQL queries and their MongoDB equivalents.

MongoDB for Jobseekers is your ultimate resource for acquiring comprehensive MongoDB skills. Equip yourself with the knowledge and confidence to excel in MongoDB-related roles and propel your career to new heights.